Introduction

The integration of the OpenAI API with Microsoft Excel is revolutionizing the way businesses interact with their data. One of the most exciting developments is the creation of Excel-based chatbots, which have the potential to automate various functions such as:

- Customer Service: Automate customer support by creating a chatbot that can handle frequently asked questions, troubleshoot issues, and provide guidance on product usage.

- Sales: Develop a sales chatbot that can assist customers in finding the right product, offer personalized recommendations, and even process orders directly from Excel.

- HR and Recruitment: Design a chatbot to automate the screening process, answer candidate queries, and schedule interviews.

- Inventory Management: Build a chatbot to help users track inventory levels, place orders for restocking, and provide real-time updates on product availability.

- Knowledge Management: Create a chatbot that can quickly retrieve information from internal databases, streamlining the process of accessing company data.

In this blog post, we will provide a detailed, step-by-step walkthrough for creating a chatbot within Excel using the OpenAI API. This guide is designed for novice Excel users, with easy-to-follow instructions and ready-to-use code snippets.

Note – Please be aware that this solution involves interacting with OpenAI’s API. I encourage users to familiarize themselves with OpenAI’s data usage policy (https://platform.openai.com/docs/data-usage-policy) and take necessary precautions to ensure the privacy and security of their data.

Step 1: Setting up the OpenAI API

First, sign up for an API key on the OpenAI website (https://beta.openai.com/signup/) and follow the instructions to get started.

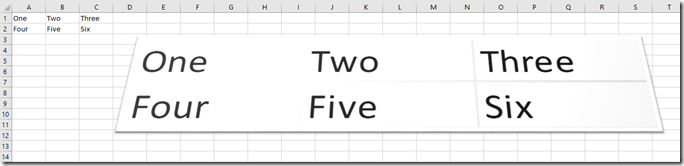

Step 2: Creating a Chatbot Interface in Excel

- Open a new Excel workbook.

- In cell A1, type “User Input”.

- In cell B1, type “Chatbot Response”.

- Format the cells as desired for better readability.

Step 3: Enable Excel Developer Tab

- Click on “File” > “Options” > “Customize Ribbon”.

- Check the box next to “Developer” in the right column and click “OK”.

Step 4: Add a Button to Trigger Chatbot

- Click the “Developer” tab.

- Click “Insert” and select “Button (Form Control)”.

- Draw the button below the “User Input” and “Chatbot Response” cells.

- Right-click the button, select “Edit Text,” and type “Submit”.

Step 5: Write VBA Code for OpenAI API Integration

- Right-click the “Submit” button and click “Assign Macro”.

- In the “Assign Macro” window, click “New”.

- Copy and paste the following code into the VBA editor:

Option Explicit

Private Sub Submit_Click()

Dim userInput As String

Dim chatbotResponse As String

' Get user input from cell A2

userInput = Range("A2").Value

' Call OpenAI API to get chatbot response

chatbotResponse = GetOpenAIResponse(userInput)

' Display chatbot response in cell B2

Range("B2").Value = chatbotResponse

End Sub

Function GetOpenAIResponse(userInput As String) As String

Dim objHTTP As Object

Dim apiKey As String

Dim apiUrl As String

Dim jsonBody As String

Dim jsonResponse As String

Dim json As Object

' Set your OpenAI API key here

apiKey = "your_openai_api_key"

' Set OpenAI API endpoint URL

apiUrl = "https://api.openai.com/v1/chat/completions"

' Create JSON request body

jsonBody = "{""messages"": [{""role"": ""system"", ""content"": ""You are a helpful assistant.""}, {""role"": ""user"", ""content"": """ & userInput & """}], ""max_tokens"": 50, ""n"": 1, ""stop"": ""\n"", ""temperature"": 0.7}"

' Create an HTTP object

Set objHTTP = CreateObject("MSXML2.ServerXMLHTTP.6.0")

' Send the request to OpenAI API

With objHTTP

.Open "POST", apiUrl, False

.setRequestHeader "Content-Type", "application/json"

.setRequestHeader "Authorization", "Bearer " & apiKey

.send jsonBody

jsonResponse = .responseText

End With

' Create a JSON parser object

Set json = CreateObject("MSXML2.DOMDocument.6.0")

json.LoadXML jsonResponse

' Extract chatbot response from JSON and return it

GetOpenAIResponse = json.SelectSingleNode("//content").Text

End Function

- Replace

your_openai_api_keywith your actual OpenAI API key. - Save the VBA code by clicking the floppy disk icon or pressing

Ctrl+S. - Close the VBA editor by clicking the “X” in the top-right corner.

Step 6: Add Microsoft XML Reference for JSON Parsing

- Press

Alt+F11to open the VBA editor. - Click “Tools” > “References” in the menu.

- Scroll down and check the box next to “Microsoft XML, v6.0” (or the latest version available).

- Click “OK” to close the “References” window.

Step 7: Test Your Excel Chatbot

- In cell A2, type a question or statement for your chatbot (e.g., “What is the capital of France?”).

- Click the “Submit” button.

- The chatbot’s response should appear in cell B2.

(Optional) Step 8: Add Context Awareness to Your Excel Chatbot

- In your Excel workbook, create a new sheet and rename it to “ConversationHistory”.

- In the VBA editor, modify the

Submit_Click()subroutine by adding the following lines of code before the linechatbotResponse = GetOpenAIResponse(userInput):

' Save user input to ConversationHistory sheet

With Sheets("ConversationHistory")

.Cells(.Cells(.Rows.Count, 1).End(xlUp).Row + 1, 1).Value = "User: " & userInput

End With

3. Modify the GetOpenAIResponse() function by adding a new argument called conversationHistory:

Function GetOpenAIResponse(userInput As String, conversationHistory As String) As String

4. Update the Submit_Click() subroutine to pass the conversation history when calling the GetOpenAIResponse() function. Replace the line chatbotResponse = GetOpenAIResponse(userInput) with the following code:

' Get conversation history

Dim conversationHistory As String

conversationHistory = Join(Application.Transpose(Sheets("ConversationHistory").UsedRange.Value), " ")

' Call OpenAI API to get chatbot response

chatbotResponse = GetOpenAIResponse(userInput, conversationHistory)

5. Modify the JSON request body in the GetOpenAIResponse() function to include the conversation history. Replace the line jsonBody = "{""prompt"": ""Chatbot: " & userInput & "\", ""max_tokens"": 50, ""n"": 1, ""stop"": ""\n"", ""temperature"": 0.7}" with the following code:

' Create JSON request body with conversation history

jsonBody = "{""prompt"": """ & conversationHistory & " Chatbot: " & userInput & "\", ""max_tokens"": 50, ""n"": 1, ""stop"": ""\n"", ""temperature"": 0.7}"

6. Finally, update the Submit_Click() subroutine to save the chatbot’s response in the “ConversationHistory” sheet. Add the following lines of code after the line Range("B2").Value = chatbotResponse:

' Save chatbot response to ConversationHistory sheet

With Sheets("ConversationHistory")

.Cells(.Cells(.Rows.Count, 1).End(xlUp).Row + 1, 1).Value = "Chatbot: " & chatbotResponse

End With

With this optional modification in place, your Excel chatbot will now maintain a conversation history in the “ConversationHistory” sheet, which will be used to provide context-aware responses. The chatbot will be able to give more accurate and relevant answers based on the user’s previous interactions.

Conclusion

You now have a working Excel-based chatbot powered by the OpenAI API. This guide has provided you with a simple, step-by-step approach to creating a chatbot interface within Excel and integrating it with the OpenAI API using VBA code. While this example uses basic functionality, you can expand and adapt the code to create chatbots for various applications, such as customer service, sales, HR, inventory management, and knowledge management. With the power of the OpenAI API and the familiar Excel interface, businesses can create powerful, user-friendly tools to enhance operations and boost productivity.

This blogpost was created with help from ChatGPT Pro.